How to use Tensorflow Lite GPU support for python code · Issue #40706 · tensorflow/tensorflow · GitHub

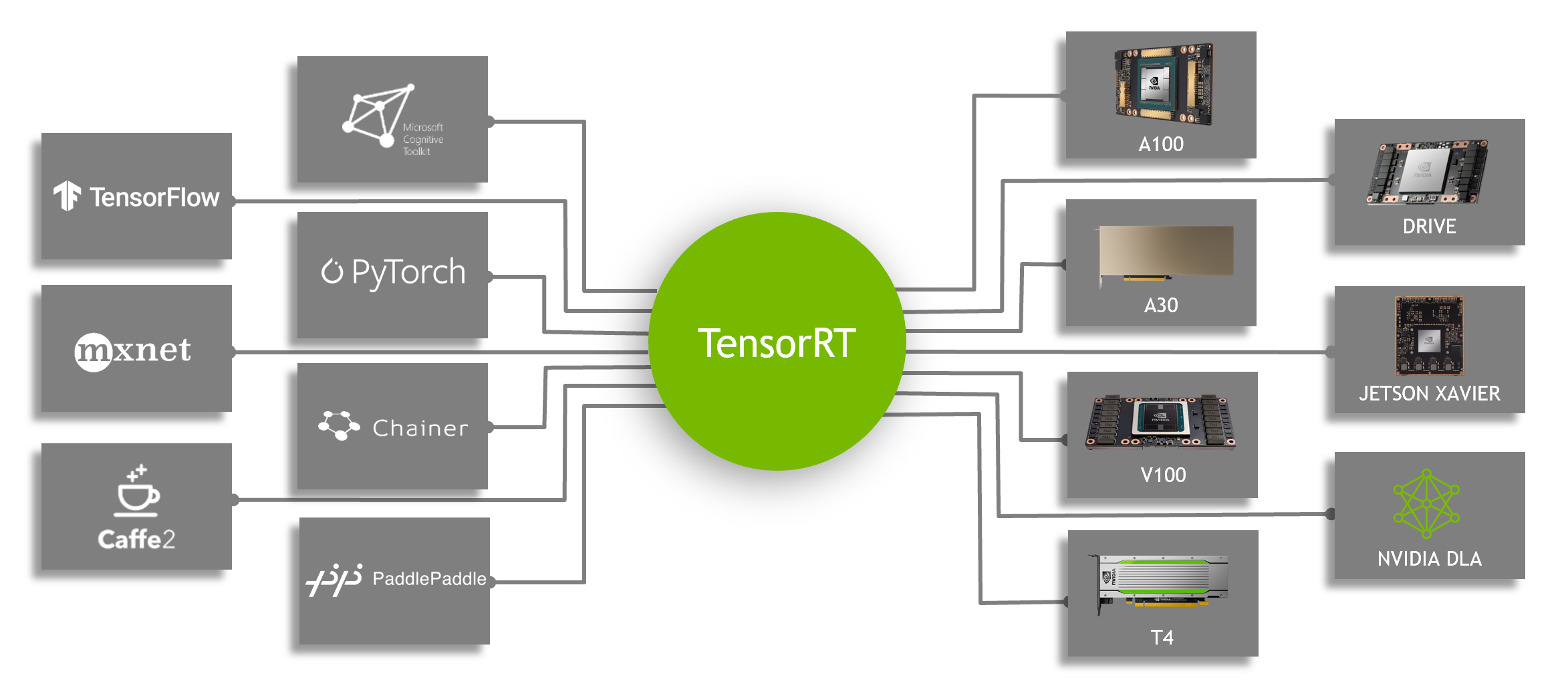

Speeding Up Deep Learning Inference Using TensorFlow, ONNX, and NVIDIA TensorRT | NVIDIA Technical Blog

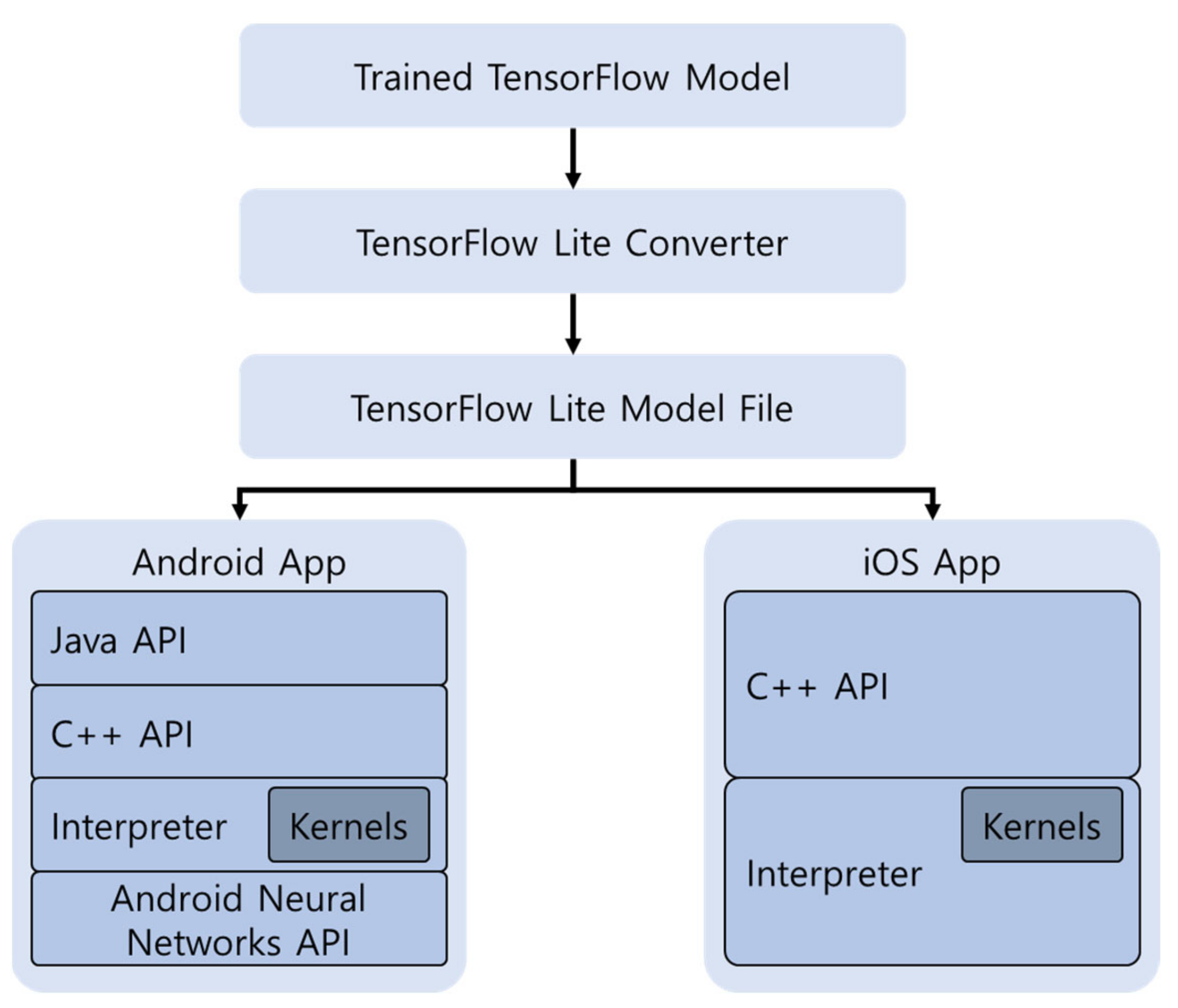

Loading and running custom TensorFlow Lite models with AI Benchmark...

Applied Sciences | Free Full-Text | A Deep Learning Framework Performance Evaluation to Use YOLO in Nvidia Jetson Platform

TensorFlow Lite Core ML delegate enables faster inference on iPhones and iPads — The TensorFlow Blog

GitHub - terryky/tflite_gles_app: GPU accelerated deep learning inference applications for RaspberryPi / JetsonNano / Linux PC using TensorflowLite GPUDelegate / TensorRT

TensorFlow on Twitter: "Deploy a custom ML model to mobile 📲 In this #GoogleIO session you'll learn how to: 🟠 Integrate ML in your mobile apps 🟠 Build custom TensorFlow Lite models

TensorflowLite Android OpenCL delegate may produce invalid Conv2D result · Issue #45974 · tensorflow/tensorflow · GitHub

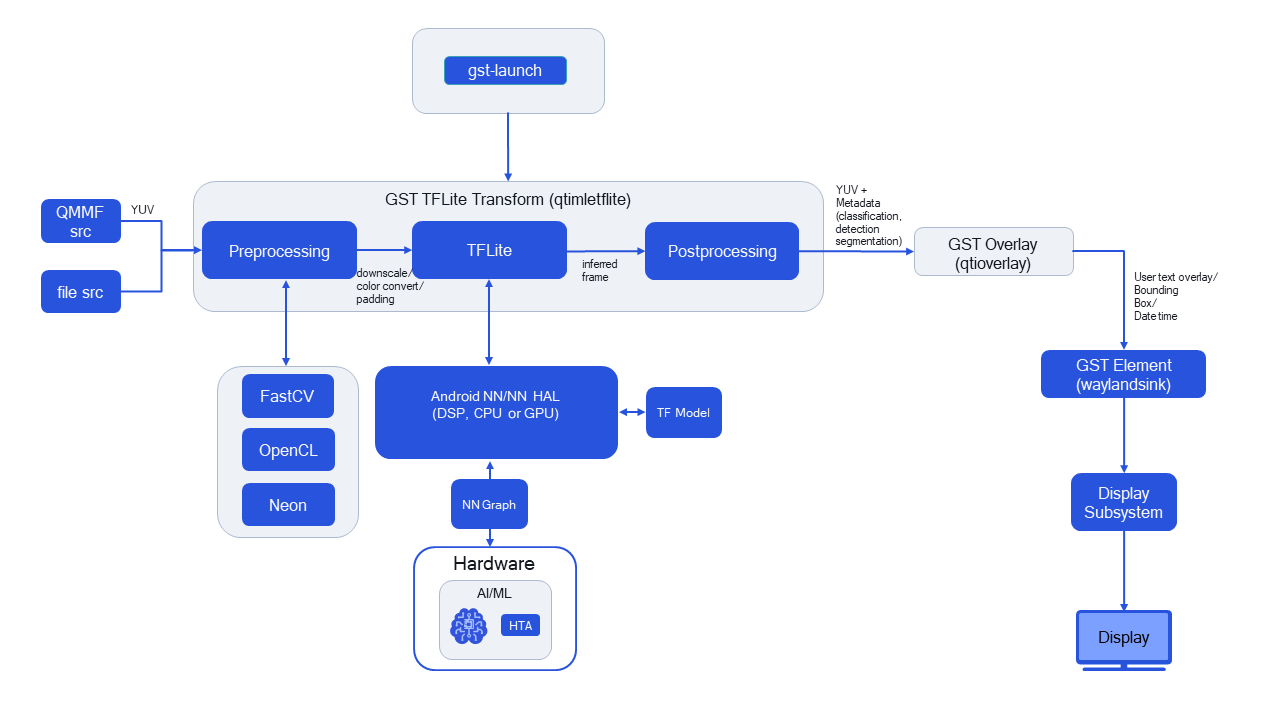

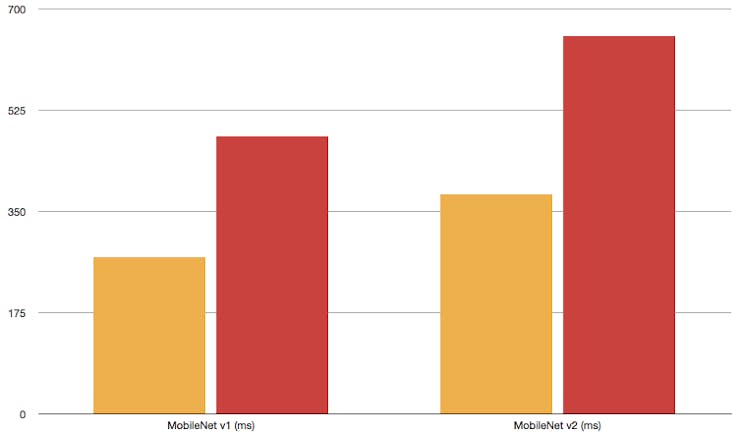

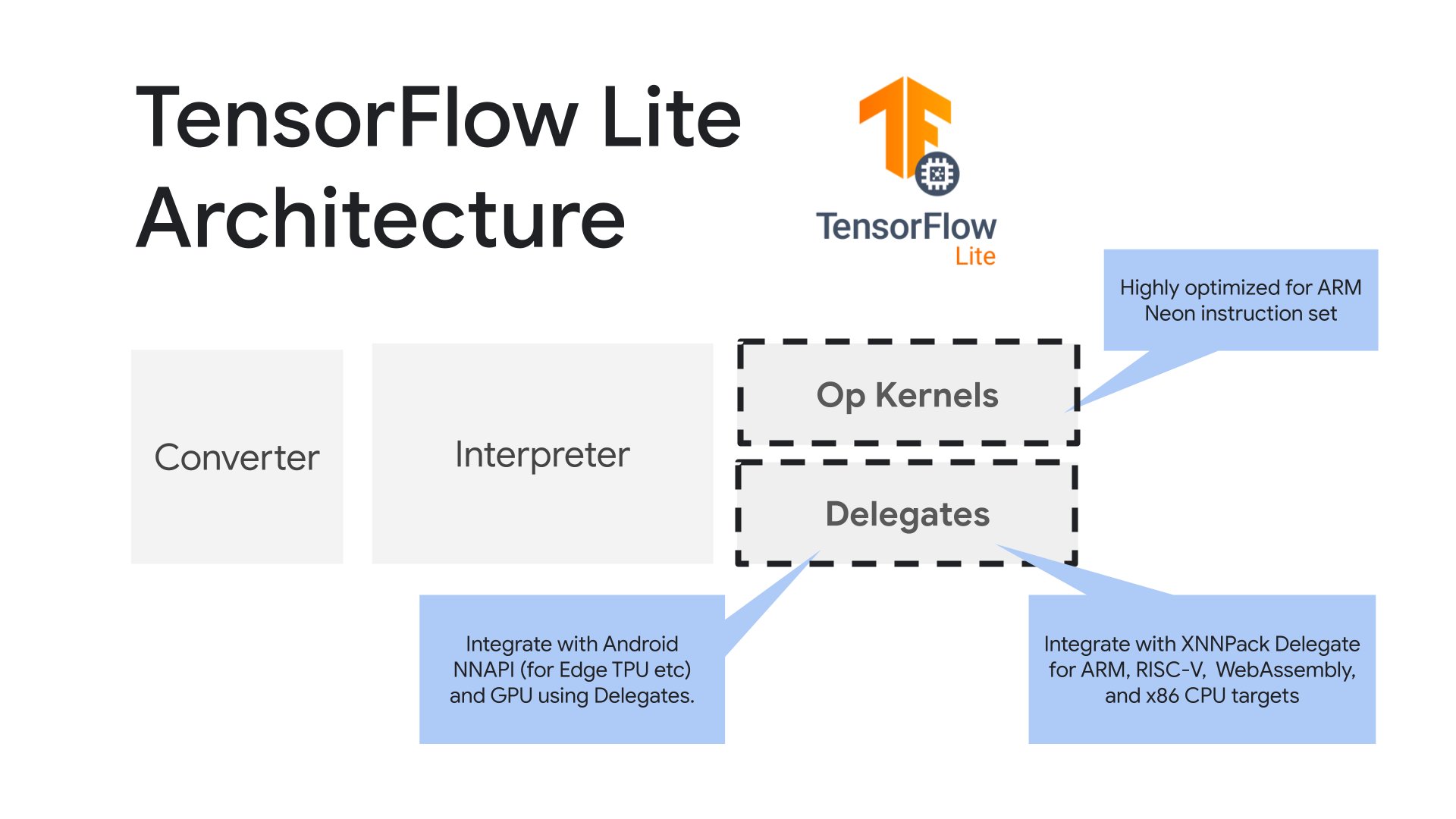

Optimizing Machine Learning on MaaXBoard Part 1: Delegates - Blog - Single-Board Computers - element14 Community